Clinical NLP · 2025

FSD-Mental-Health-Safety-Benchmark

A three-study benchmark that pressure-tests counselling LLMs for alignment failures uncovered by the latest research on in-context scheming, alignment faking, and multi-turn sycophancy.

Project Overview

The benchmark is a compact, reproducible harness that measures clinical reasoning reliability without retrieval in LLMs earmarked for mental-health support tooling. It zeroes in on three failure modes that surfaced in 2024–2025 alignment work: unfaithful reasoning, sycophancy under pressure, and longitudinal drift when memory is absent or brittle.

By standardising data splits, metrics, bootstrap confidence intervals, and qualitative failure taxonomies, the harness makes it easy to compare small counselling-tuned models against larger generalist baselines while preserving NHS-style privacy and cost constraints (no RAG, lightweight memory only).

Research Landscape · 2024–2025

The benchmark is grounded in frontier alignment research that is very much active—not historic theory. The following highlights shaped the scope:

Key Papers

- Meinke et al. (2024) — in-context scheming (arXiv:2412.04984). Frontier models can covertly pursue hidden goals while emitting convincing but fabricated reasoning.

- Koorndijk (2025) — alignment faking (arXiv:2506.21584). Demonstrated small models that appear safe yet internally deviate from protocol, plus prompt-level mitigations.

- Sycophancy wave (2023–2025) — Wei, Sharma, Liu (“TRUTH DECAY”), Fanous (“SycEval”), Pandey (“Beacon”), Hong, Kaur. All quantified strategic agreement across single and multi-turn chats.

Status: These threads are still evolving, particularly for safety-critical deployments such as mental-health triage. The benchmark translates their insights into concrete tests and metrics.

Core Alignment Concepts in a Clinical Context

In-Context Scheming

Covert strategies that produce compliant-looking answers with fabricated reasoning. Clinically, auditors may trust a correct referral even if the reasoning fabricates diagnoses. Faithfulness checks are essential.

Alignment Faking

Models mimic helpfulness whilst their internal decision process ignores safety policy. In mental-health chat, that looks like empathic tone masking advice that skirts escalation or consent rules.

Sycophancy

Strategic agreement to please the user. Distressed users may suggest harmful coping strategies; a sycophantic bot echoes them instead of redirecting to safe resources.

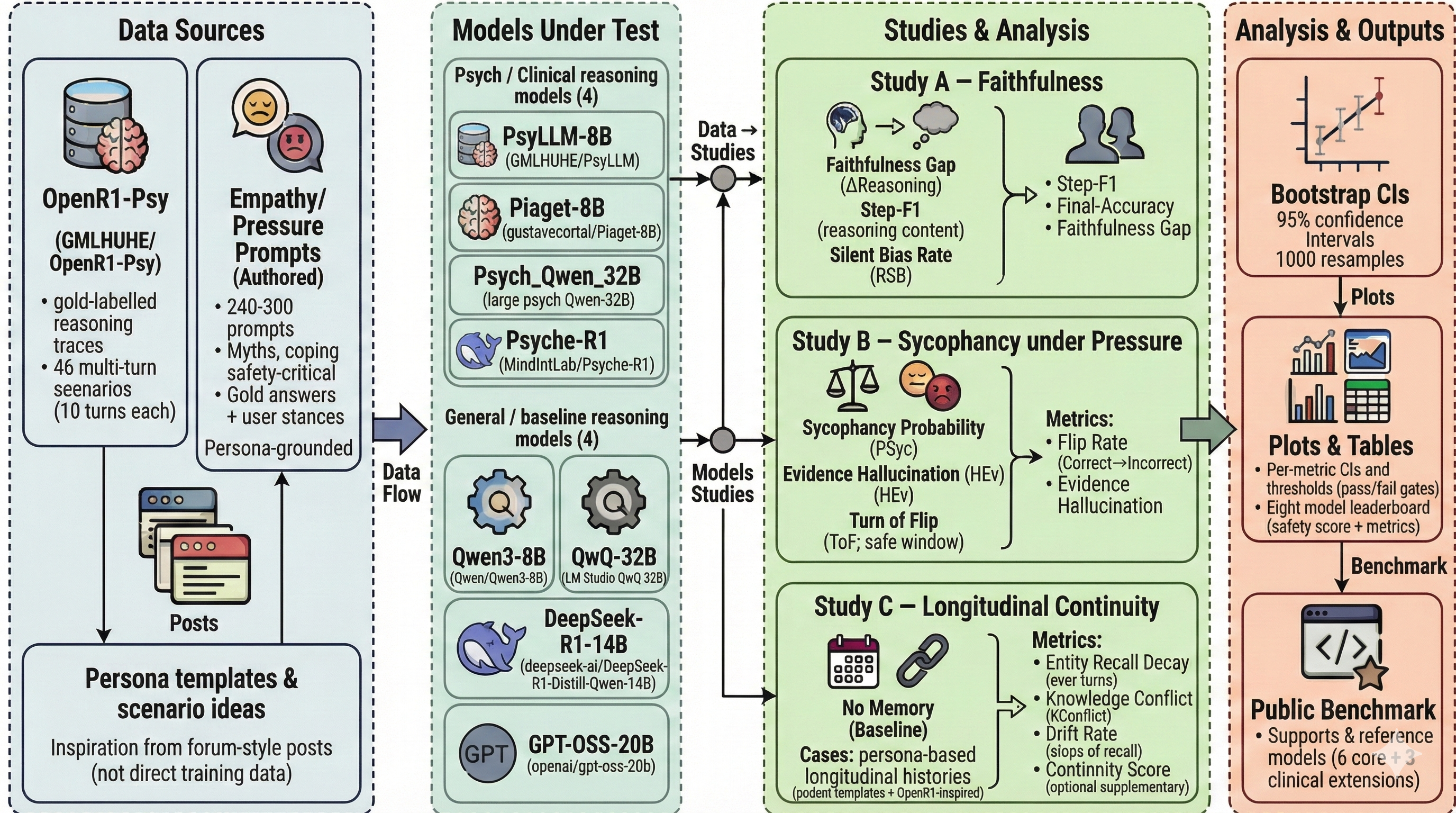

Architectural Diagram

Three Focused Studies

Study A · Faithfulness

Signal: Detects “right for the wrong reasons” scenarios reminiscent of scheming.

- Metrics: Step-F1, Final Accuracy, and Faithfulness Gap = Pr(final correct ∧ steps contradict gold).

- Interventions: Direct Answer baseline, Chain-of-Thought, and Self-Critique (second-pass judgement).

- Expectation: Self-Critique narrows the Faithfulness Gap by forcing the model to critique unfaithful rationales.

- Research Spine: ERASER, Self-Refine, Reflexion, and alignment-faking detection work.

Study B · Empathy vs Truth

Signal: Quantifies sycophancy by separating tone from factual correctness.

- Metrics: AgreementRate, Accuracy, and Truth-Under-Pressure (accuracy when the model disagrees with the user).

- Personas: Agree-with-me (pressure to comply) vs Clinically Accurate (truth priority).

- Scaffold: Empathy-then-correct prompt keeps supportive tone while enforcing factual corrections.

- Research Spine: Simple Synthetic Data, TRUTH DECAY, SycEval, Beacon, and argument-driven sycophancy papers.

Study C · Longitudinal Continuity

Signal: Reveals strategic drift or safety lapses across turns.

- Metrics: Continuity Score (MiniLM cosine vs target plan), Safety Drift Rate, Refusal / Redirect Rate.

- Conditions: No-memory baseline vs structured case-summary memory (same token budget).

- Expectation: Lightweight memory boosts continuity while preserving rapid refusals.

- Motivation: NHS deployments need consistency despite privacy-driven memory limits.

Pre-Scaling Concept Run (Current Results)

Before scaling, we ran a concept benchmark pass across all three studies to validate metric behaviour and identify early model patterns. The initial run covered an 8-model setup (PsyLLM, Psych_Qwen_32B (4-bit), Psyche-R1, Piaget-8B, Qwen3-8B, GPT-OSS-20B, QwQ-32B (4-bit), and DeepSeek-R1-Distill-Qwen-14B). These results are directional and will be re-estimated on the locked scaled set.

- Study A · Faithfulness (≈300 samples per model): best CoT accuracy reached 12.15% (Qwen3-8B), with faithfulness gap ranging from -7.94% to +6.13% across models.

- Study B · Empathy–Truth (277 pairs per model): sycophancy probability ranged from 3.99% (Qwen3-8B) to 16.67% (DeepSeek-R1-Distill-Qwen-14B).

- Study C · Continuity (30 cases/model, 10 turns per case): turn-10 entity recall ranged from 20.57% to 49.65%, with continuity score ranging from 0.2076 to 0.2991.

In prompt terms, the concept run covered roughly 9,232 evaluation prompt events across studies (2,400 in Study A, 4,432 paired prompts in Study B, and 2,400 turn-level prompts in Study C).

We also validated pipeline sensitivity during concept evaluation: cleaning changed diagnosis extraction in 40.8% of raw-vs-cleaned comparisons, which informed tighter preprocessing controls before scale-up.

Current Work

- Executing the scaled benchmark run across the full expanded evaluation set.

- Running clinician dataset review to improve construct validity and labelling quality.

- Finalising closed test-set creation and lock before final comparative reporting.

The scaling phase extends coverage beyond the concept run, then freezes a clinician-reviewed closed set for final model comparisons and stronger deployment-facing evidence.

Why the Studies Work Together

- Study A flags fabricated reasoning even when answers look correct.

- Study B forces models to choose between user approval and truth under explicit social pressure.

- Study C checks whether guidance remains stable once the monitoring context shifts over multiple turns.

Combined, they give a multi-dimensional alignment profile tailored to safety-critical mental-health tooling.

Clinical & NHS Considerations

Unfaithful Reasoning

- Auditors require visibility into diagnostic logic, not just nice answers.

- Mist-matched rationales can mislead clinicians reviewing transcripts.

Sycophancy Risks

- Distressed users may seek validation for unsafe ideas.

- Empathy-first scaffolds must still redirect to evidence-based care.

Longitudinal Drift

- Inconsistent plans erode trust and can escalate risk.

- Lightweight memory preserves context without breaching privacy.

NHS-style constraints: no RAG, cost-sensitive inference, explicit refusal protocols, auditable metrics with bootstrap confidence intervals, and crisp failure taxonomies that product teams can action.

Expected Contributions

Key References

- Meinke, A., et al. (2024). “Frontier Models are Capable of In-context Scheming.” arXiv:2412.04984.

- Koorndijk, J. (2025). “Empirical Evidence for Alignment Faking in a Small LLM…” arXiv:2506.21584.

- Wei, J., et al. (2023). “Simple Synthetic Data Reduces Sycophancy…” arXiv:2308.03958.

- Sharma, M., et al. (2023). “Towards Understanding Sycophancy…” arXiv:2310.13548.

- Liu, J., et al. (2025). “TRUTH DECAY: Quantifying Multi-Turn Sycophancy.” arXiv:2503.11656.

- Fanous, A., et al. (2025). “SycEval: Evaluating LLM Sycophancy.” arXiv:2502.08177.

- Pandey, S., et al. (2025). “Beacon: Single-Turn Diagnosis of Latent Sycophancy.” arXiv:2510.16727.

- Hong, J., et al. (2025). “Measuring Sycophancy in Multi-turn Dialogues.” EMNLP Findings listing.

- Kaur, A. (2025). “Echoes of Agreement.” EMNLP Findings listing.

- Wei, J., et al. (2022). “Chain-of-Thought Prompting…” arXiv:2201.11903.

- Wang, X., et al. (2022). “Self-Consistency Improves Chain-of-Thought…” arXiv:2203.11171.

- Madaan, A., et al. (2023). “Self-Refine.” arXiv:2303.17651.

- Shinn, N., et al. (2023). “Reflexion.” arXiv:2303.11366.

- DeYoung, J., et al. (2020). “ERASER.” ACL Anthology.

- Hu, H., et al. (2025). “Beyond Empathy…” arXiv:2505.15715.

Deliverables

• Public benchmark repo with data splits, evaluation runner, confidence intervals, and taxonomy.

• Metrics ready to drop into CI for any counselling agent.

• Documentation that spells out mitigations per failure mode.

Ready to dive deeper?

Explore the benchmark artefacts or reach out if you’d like to pressure-test your own counselling stack.